Storing data as Knowledge Graphs

1989, CERN Switzerland

Tim Berners-Lee

inventor of the World Wide Web

The Web's foundational ideas

-

Data items are linked to each other

Across heterogeneous systems (decentralized)

-

Browser programs

Visualise data and help users navigate the Web

-

Everyone can read data

Using Web browsers

-

Open standards

Anyone can implement tools (browsers, ...) on top of it

1990-... World-wide adoption

Not just for researchers anymore

The Web is a global information space

a.k.a. The World Wide Web (WWW)

Mostly used by humans through Web browsers

The Web is focused on humans

-

Web pages show information

Visualized using Web browsers

-

Clicking on links

To discover new information

-

Search information

Using search engines such as Google, Bing, ...

Achieving tasks requires manual effort

-

Will it rain next week?

- Find a weather prediction website

- Select your location

- Navigate to next week

-

Book a trip for a group of people

- Comparing agendas

- Comparing interests: nature, museums, ...

- Regional temperatures

- Geopolitical status

- ...

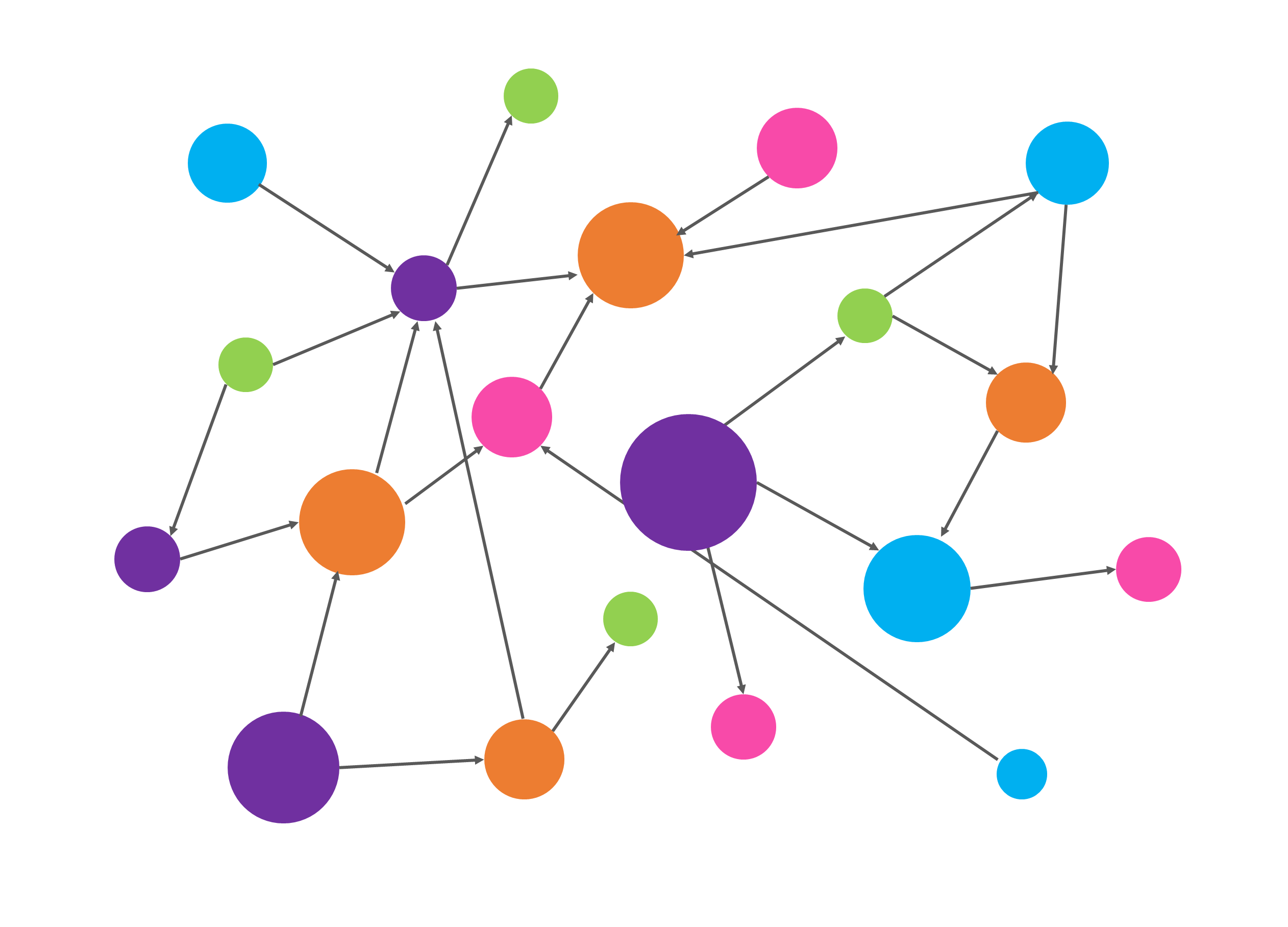

What if intelligent agents could do these tasks for us?

I Have a Dream

A Web where intelligent agents are able to achieve our day-to-day tasks.

2001

The Semantic Web will bring structure to the meaningful content of Web pages, creating an environment where software agents roaming from page to page can readily carry out sophisticated tasks for users.

The dream: Getting there step by step

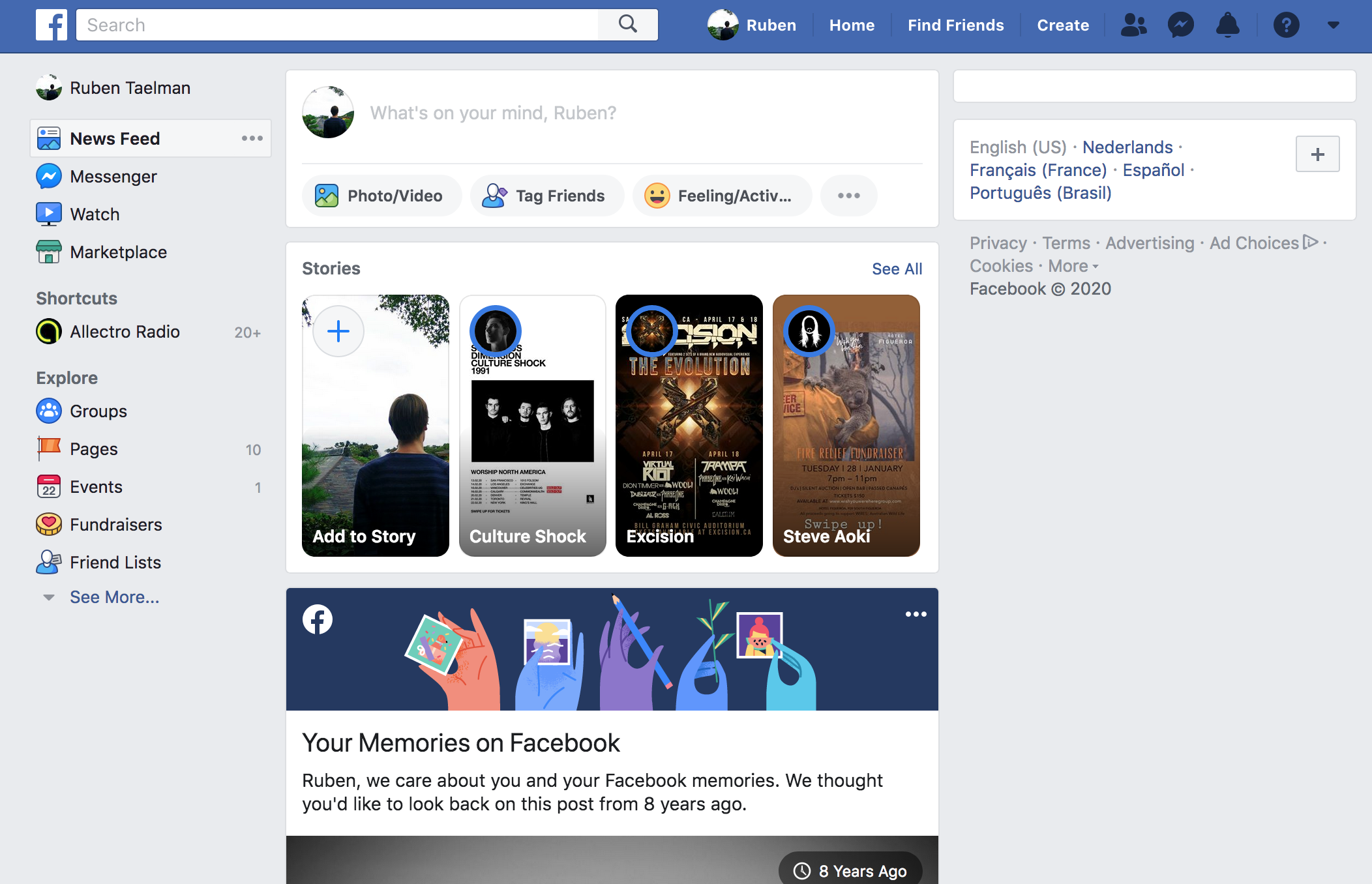

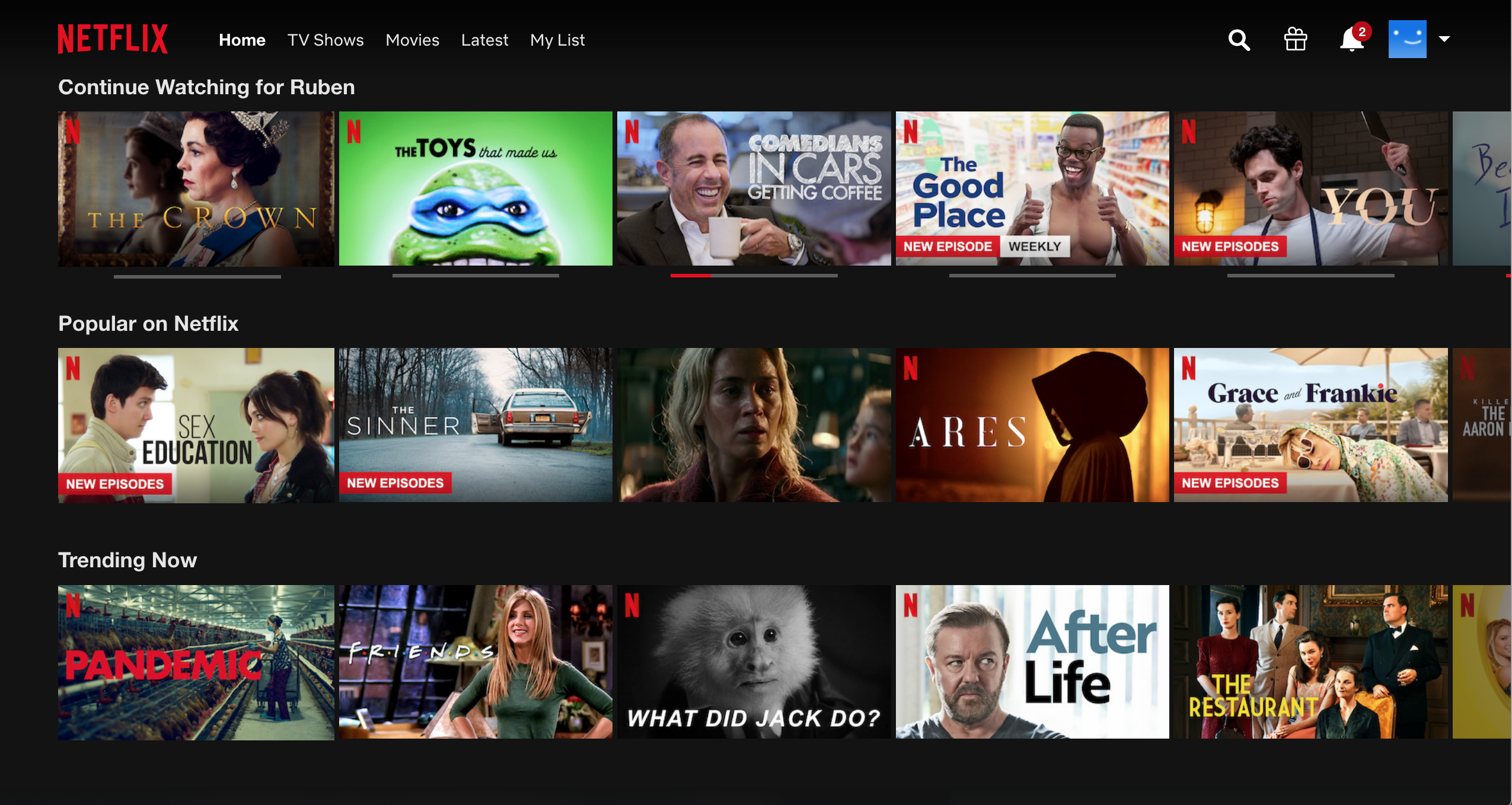

2015: Agents are being introduced that can perform simple tasks

Google Assistant, Alexa, Siri, ...


How do these agents work?

→ Execute Queries over Knowledge Graphs

Query = Structured Question

Knowledge Graph = Structured information

→ Structured questions over structured information

A Knowledge Graph is a collection of structured information

Semantic Web technologies

-

RDF

Standard for representing and exchanging graph data

Knowledge Graphs are collections of such graph data

-

SPARQL

Standard for querying over RDF data

Generative AI models

2022: LLMs offer human-like conversations based on crawled Web data

Differences to Knowledge Graphs:

- Adaptive understanding of unstructured data

- Inconsistent answers and hallucinations

- Black box

Agentic AI: multi-step agents

2025: LLMs act as orchestrators that use tools

Combines the best of both worlds:

- Creativity and generative nature of LLMs

- Precision of external tools (e.g. a calculator), Knowledge Graphs (neurosymbolic AI), …

RDF enables data exchange on the Web

-

RDF

Resource Description Framework

-

RDF 1.0

Recommended by W3C since 1999

-

RDF 1.1

Recommended by W3C since 2014

-

RDF 1.2

Upcoming in 2026

Facts are represented as RDF triples

Multiple RDF triples form RDF datasets

:Alice :knows :Bob .

:Alice :knows :Carol .

:Alice :name "Alice" .

:Bob :name "Bob" .

:Bob :knows :Carol .
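As a minimal sketch (not tied to any RDF library), the triples above can be held in Python as a set of (subject, predicate, object) tuples. The helper name `objects` is hypothetical, and the `:`-prefixed names are kept as plain strings instead of being expanded to full IRIs.

```python
# Each RDF triple is a (subject, predicate, object) tuple.
# Assumption: prefixed names such as ":Alice" are kept as plain strings here;
# a real RDF parser would expand them to full IRIs.
triples = {
    (":Alice", ":knows", ":Bob"),
    (":Alice", ":knows", ":Carol"),
    (":Alice", ":name", "Alice"),
    (":Bob", ":name", "Bob"),
    (":Bob", ":knows", ":Carol"),
}


def objects(graph, subject, predicate):
    """Return the sorted objects of all triples with this subject and predicate."""
    return sorted(o for s, p, o in graph if s == subject and p == predicate)


print(objects(triples, ":Alice", ":knows"))
```

Because the collection is a set, adding the same fact twice has no effect, which mirrors how an RDF graph treats duplicate triples.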


RDF Resources are identified by IRIs

https://example.org/Alice

-

IRI

Internationalized Resource Identifier

-

Global identifiers

Can be used by different data sources → interlinking!

-

IRIs can be URLs

A URL is an IRI that can be dereferenced (e.g. looked up in Web browsers).

Allows additional information to be looked up for a resource.

Multiple syntaxes exist for RDF

Turtle

PREFIX dbr: <http://dbpedia.org/resource/>

PREFIX foaf: <http://xmlns.com/foaf/0.1/>

dbr:Alice foaf:knows dbr:Bob .

dbr:Alice foaf:name "Alice" .

JSON-LD

{

"@context": {

"dbr": "http://dbpedia.org/resource/",

"foaf": "http://xmlns.com/foaf/0.1/"

},

"@id": "dbr:Alice",

"foaf:knows": { "@id": "dbr:Bob" },

"foaf:name": "Alice"

}
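Both syntaxes rely on prefixes to abbreviate IRIs. The following sketch shows, under simplifying assumptions, how a prefixed name could be expanded back into a full IRI. The function name `expand` is hypothetical, and real Turtle or JSON-LD parsers handle many more cases (relative IRIs, escapes, keywords).

```python
# Prefix declarations taken from the Turtle and JSON-LD examples.
PREFIXES = {
    "dbr": "http://dbpedia.org/resource/",
    "foaf": "http://xmlns.com/foaf/0.1/",
}


def expand(name, prefixes=PREFIXES):
    """Expand a prefixed name such as 'dbr:Alice' into a full IRI.

    Names whose prefix is unknown (for example full IRIs) are returned unchanged.
    """
    prefix, separator, local = name.partition(":")
    if separator and prefix in prefixes:
        return prefixes[prefix] + local
    return name


for term in ("dbr:Alice", "foaf:knows", "dbr:Bob"):
    print(term, "->", expand(term))
```

After expansion, the Turtle and JSON-LD documents describe the same resources, which is what makes the choice of syntax a matter of convenience.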

SPARQL querying over RDF datasets

- SPARQL: language to read and update RDF datasets via declarative queries.

-

Different query forms:

- SELECT: selecting values in tabular form → focus of this presentation

- CONSTRUCT: construct new triples

- ASK: check if data exists

- DESCRIBE: describe a given resource

- INSERT: insert new triples

- DELETE: delete existing triples

Specification: https://www.w3.org/TR/sparql-query/

| name | deathDate |
| --- | --- |
| Albert Joseph Moore | |
| Charles Francis Hansom | 1888 |
| David Reed (comedian) | |
| Dustin Gee | |
| E Ridsdale Tate | 1922 |


How do query engines process a query?

RDF dataset + SPARQL query

↓

SPARQL query processing

↓

query results

Basic Graph Patterns enable graph pattern matching

Query results representation

-

Solution Mapping

Mapping from a set of variable labels to a set of RDF terms.

-

Solution Sequence

A list of solution mappings.

1 solution sequence with 3 solution mappings:

| name | birthplace |
| --- | --- |
| Bob Brockmann | http://dbpedia.org/resource/Louisiana |
| Bennie Nawahi | http://dbpedia.org/resource/Honolulu |
| Weird Al Yankovic | http://dbpedia.org/resource/Downey,_California |
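To make Basic Graph Pattern matching and solution mappings concrete, here is a naive sketch in Python. It assumes triples and triple patterns are plain tuples of strings and that variables start with `?`. The function names `match_pattern` and `evaluate_bgp` are hypothetical, and real engines use indexes and join algorithms instead of nested loops.

```python
def match_pattern(pattern, triple, binding):
    """Extend `binding` so that `pattern` matches `triple`, or return None."""
    result = dict(binding)
    for term, value in zip(pattern, triple):
        if term.startswith("?"):
            # A variable must keep the value it was bound to earlier.
            if result.setdefault(term, value) != value:
                return None
        elif term != value:
            return None
    return result


def evaluate_bgp(patterns, graph):
    """Return a solution sequence: a list of solution mappings (dicts)."""
    solutions = [{}]
    for pattern in patterns:
        solutions = [
            extended
            for binding in solutions
            for triple in graph
            if (extended := match_pattern(pattern, triple, binding)) is not None
        ]
    return solutions


# Toy graph (assumption: literals and prefixed names are plain strings).
graph = [
    (":Alice", ":knows", ":Bob"),
    (":Alice", ":knows", ":Carol"),
    (":Alice", ":name", "Alice"),
    (":Bob", ":name", "Bob"),
    (":Bob", ":knows", ":Carol"),
]

# Who knows Carol, and what is their name?
bgp = [("?person", ":knows", ":Carol"), ("?person", ":name", "?name")]
for solution in evaluate_bgp(bgp, graph):
    print(solution)
```

Each printed dictionary is one solution mapping, and the list as a whole is the solution sequence.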

Steps in SPARQL query processing

-

1. Parsing

Transform a SPARQL query string into an algebra expression

-

2. Optimization

Transform algebra expression into a query plan

-

3. Evaluation

Execute the query plan to obtain query results
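The three steps can be sketched as a toy pipeline. This is an illustration under strong assumptions, not how a production engine works: the parser only accepts `SELECT ... WHERE { ... }` with whitespace-separated terms, the "optimizer" simply orders patterns by their number of variables, and the names `parse`, `optimize` and `evaluate` are hypothetical.

```python
import re


def parse(query):
    """Step 1: turn a (very restricted) SELECT query string into an algebra-like dict."""
    match = re.fullmatch(r"\s*SELECT\s+(.+?)\s+WHERE\s*\{(.*)\}\s*", query, re.DOTALL)
    if match is None:
        raise ValueError("unsupported query")
    patterns = [tuple(p.split()) for p in match.group(2).split(" .") if p.strip()]
    return {"project": match.group(1).split(), "bgp": patterns}


def optimize(algebra):
    """Step 2: build a plan by evaluating patterns with fewer variables first."""
    def variable_count(pattern):
        return sum(term.startswith("?") for term in pattern)
    return {**algebra, "bgp": sorted(algebra["bgp"], key=variable_count)}


def evaluate(plan, graph):
    """Step 3: run the plan with nested-loop joins, then project the variables."""
    solutions = [{}]
    for pattern in plan["bgp"]:
        extended = []
        for binding in solutions:
            for triple in graph:
                candidate = dict(binding)
                if all(
                    candidate.setdefault(term, value) == value
                    if term.startswith("?") else term == value
                    for term, value in zip(pattern, triple)
                ):
                    extended.append(candidate)
        solutions = extended
    return [{v: s[v] for v in plan["project"]} for s in solutions]


graph = [
    (":Alice", ":knows", ":Bob"),
    (":Alice", ":knows", ":Carol"),
    (":Alice", ":name", "Alice"),
    (":Bob", ":name", "Bob"),
    (":Bob", ":knows", ":Carol"),
]
query = "SELECT ?name WHERE { ?person :name ?name . ?person :knows :Carol . }"
plan = optimize(parse(query))
print(plan["bgp"])
print(evaluate(plan, graph))
```

The reordering matters because the more selective pattern shrinks the set of intermediate solutions before the less selective one is joined in.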

Publishing Knowledge Graphs as SPARQL Endpoints

SPARQL endpoint: API that accepts SPARQL queries, and replies with results.

-

Most popular way to publish Knowledge Graphs

Alternatives are data dumps and Linked Data Documents

-

Very powerful

Very complex queries can be formulated with SPARQL

-

Power comes with a cost

SPARQL endpoints can require very powerful servers
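As a rough illustration of what talking to a SPARQL endpoint involves, the sketch below builds (but does not send) an HTTP GET request in the style of the SPARQL Protocol, using only the Python standard library. The helper name `build_sparql_request` is hypothetical, and the DBpedia endpoint URL and the query are chosen only as an example.

```python
import urllib.parse
import urllib.request


def build_sparql_request(endpoint, query):
    """Build an HTTP GET request that passes the query in the `query` parameter."""
    url = endpoint + "?" + urllib.parse.urlencode({"query": query})
    # Ask for SELECT results in the SPARQL JSON results format.
    return urllib.request.Request(
        url, headers={"Accept": "application/sparql-results+json"}
    )


request = build_sparql_request(
    "https://dbpedia.org/sparql",  # example endpoint, assumed to be reachable
    "SELECT ?s WHERE { ?s ?p ?o } LIMIT 1",
)
print(request.get_method(), request.full_url)

# Sending the request requires network access and is therefore left out:
# with urllib.request.urlopen(request) as response:
#     print(response.read().decode("utf-8"))
```

The server has to evaluate whatever query arrives in that parameter, which is where the cost mentioned above comes from.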

SPARQL processing over centralized data

-

Dataset is collocated with query engine

All data is known beforehand

-

Single dataset

Combining multiple datasets is hard

Centralization not always possible

-

Private data

Technical and legal reasons

-

Evolving data

Requires continuous re-indexing

-

Web scale data

Indexing the whole Web is infeasible (for non-tech-giants)

How to query over decentralized data?

-

Data and query engine are not collocated

Query engine runs on a separate machine

-

Not just one dataset

Data is spread over the Web into multiple documents

Approaches for querying over decentralized data

-

Federated Query Processing

Distributing query execution across known sources

-

Link Traversal Query Processing

Local query execution over sources that are discovered by following links

Client distributes query over query APIs

-

Clients perform limited effort

Split up the query, distribute it (source selection), and combine results

-

Servers perform most of the effort

They actually execute the queries, over potentially huge datasets
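The "combine results" step on the client can be pictured as a join over the solution mappings returned by each server. The sketch below is a naive nested-loop join. The function name `join` and all result values are hypothetical placeholders, not real data.

```python
def join(left, right):
    """Combine two solution sequences, keeping pairs that agree on shared variables."""
    joined = []
    for l in left:
        for r in right:
            shared = l.keys() & r.keys()
            if all(l[variable] == r[variable] for variable in shared):
                joined.append({**l, **r})
    return joined


# Hypothetical partial results, as two different servers might return them.
drug_results = [
    {"?drug": "ex:drugA", "?id": "id-1"},
    {"?drug": "ex:drugB", "?id": "id-2"},
]
kegg_results = [
    {"?id": "id-2", "?title": "Title B"},
    {"?id": "id-3", "?title": "Title C"},
]

print(join(drug_results, kegg_results))
```

Only the pair that shares the same `?id` survives, so the client ends up with a single combined solution.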

Federation over SPARQL endpoints

-

Servers are SPARQL endpoints (most common)

They accept any valid SPARQL query

-

Client-side source selection

Rewrite query in terms of SERVICE clauses

SELECT ?drug ?title WHERE {

?drug db:drugCategory dbc:micronutrient.

?drug db:casRegistryNumber ?id.

?keggDrug rdf:type kegg:Drug.

?keggDrug bio2rdf:xRef ?id.

?keggDrug purl:title ?title.

}

SELECT ?drug ?title WHERE {

SERVICE <http://example.com/drb> {

?drug db:drugCategory dbc:micronutrient.

?drug db:casRegistryNumber ?id.

}

SERVICE <http://example.com/kegg> {

?keggDrug rdf:type kegg:Drug.

?keggDrug bio2rdf:xRef ?id.

?keggDrug purl:title ?title.

}

}
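The rewrite from the first query to the second can be sketched as follows. This is a simplified illustration: it assumes a hypothetical, hand-written index that lists which predicates each endpoint can answer, whereas real federation engines typically derive this from endpoint metadata or probe queries. The names `SOURCES`, `select_source` and `to_service_query` are made up for this example.

```python
# Hypothetical capability index: which predicates each endpoint can answer.
SOURCES = {
    "http://example.com/drb": {"db:drugCategory", "db:casRegistryNumber"},
    "http://example.com/kegg": {"rdf:type", "bio2rdf:xRef", "purl:title"},
}

PATTERNS = [
    "?drug db:drugCategory dbc:micronutrient",
    "?drug db:casRegistryNumber ?id",
    "?keggDrug rdf:type kegg:Drug",
    "?keggDrug bio2rdf:xRef ?id",
    "?keggDrug purl:title ?title",
]


def select_source(pattern, sources):
    """Pick the first endpoint whose index contains the pattern's predicate."""
    predicate = pattern.split()[1]
    for endpoint, predicates in sources.items():
        if predicate in predicates:
            return endpoint
    raise ValueError(f"no source for predicate {predicate}")


def to_service_query(variables, patterns, sources=SOURCES):
    """Group patterns per endpoint and wrap each group in a SERVICE clause."""
    groups = {}
    for pattern in patterns:
        groups.setdefault(select_source(pattern, sources), []).append(pattern)
    lines = [f"SELECT {' '.join(variables)} WHERE {{"]
    for endpoint, group in groups.items():
        lines.append(f"  SERVICE <{endpoint}> {{")
        lines.extend(f"    {pattern} ." for pattern in group)
        lines.append("  }")
    lines.append("}")
    return "\n".join(lines)


federated_query = to_service_query(["?drug", "?title"], PATTERNS)
print(federated_query)
```

Prefix declarations are omitted here, as in the queries above.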

Federation over heterogeneous sources

-

Servers are not only SPARQL endpoints

Other types of Linked Data Fragments: TPF, WiseKG, brTPF, ...

Different levels of server expressivity

-

Clients may have to take on more effort

Executing parts of queries client-side

-

Trade-off between server and client effort

Low-cost publishing and preventing server availability issues

Limitations of federated querying

-

All federation members must be known before execution starts

Source selection distributes query across list of sources

No discovery of new sources

-

Limited scalability in terms of number of endpoints

Current federation techniques scale to the order of 10 sources

-

Public endpoints have restrictions

Timeouts, rate limits, …

Current federation techniques cannot cope with them

Exploit interlinking of documents

-

Linked Data documents are linked to each other

Following the Linked Data principles

-

Query engine can follow links

Start from one document, and discover new documents on the fly

Example: decentralized address book

Example: Find Alice's contact names

SELECT ?name WHERE {

<https://alice.pods.org/profile#me>

foaf:knows ?person.

?person foaf:name ?name.

}

Query process:

- Start from Alice's address book

- Follow links to profiles of Bob and Carol

- Query over union of all profiles

- Find query results:

[ { "name": "Bob" }, { "name": "Carol" } ]

The Link Traversal Query Process

Link queue: stores links to documents that need to be fetched

-

1. Initialize link queue with seed URLs

Seed URLs can be user-defined, or derived from query

-

2. Iterate and append to link queue

Iteratively pop head off queue, and follow link to document

Add all URLs in document to link queue

-

3. Execute query

Over union of all discovered RDF triples
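The three steps can be illustrated with a small in-memory simulation of the address book example. Everything here is a sketch under assumptions: the `WEB` dictionary stands in for HTTP fetching and RDF parsing, the profile URLs of Bob and Carol are hypothetical, and the function names `traverse` and `contact_names` are made up for this example.

```python
from collections import deque

FOAF = "http://xmlns.com/foaf/0.1/"
ALICE = "https://alice.pods.org/profile#me"
BOB = "https://bob.pods.org/profile#me"      # hypothetical URL
CAROL = "https://carol.pods.org/profile#me"  # hypothetical URL

# Stand-in for the Web: document URL -> triples found in that document.
WEB = {
    "https://alice.pods.org/profile": [
        (ALICE, FOAF + "knows", BOB),
        (ALICE, FOAF + "knows", CAROL),
    ],
    "https://bob.pods.org/profile": [(BOB, FOAF + "name", "Bob")],
    "https://carol.pods.org/profile": [(CAROL, FOAF + "name", "Carol")],
}


def traverse(seed_urls):
    """Steps 1 and 2: follow links from the seeds and collect all triples."""
    queue = deque(seed_urls)
    fetched = set()
    triples = []
    while queue:
        url = queue.popleft().split("#")[0]  # a document URL has no fragment
        if url in fetched:
            continue
        fetched.add(url)
        document = WEB.get(url, [])  # unknown documents yield no triples
        triples.extend(document)
        # Add every URL mentioned in the document to the link queue.
        queue.extend(
            term for triple in document for term in triple if term.startswith("http")
        )
    return triples


def contact_names(triples, me):
    """Step 3: answer the query over the union of all discovered triples."""
    known = {o for s, p, o in triples if s == me and p == FOAF + "knows"}
    return sorted(o for s, p, o in triples if s in known and p == FOAF + "name")


print(contact_names(traverse([ALICE]), ALICE))
```

Note that this version also enqueues vocabulary URLs such as the FOAF terms, which hints at why following every link does not scale on the open Web.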

Link Traversal: too slow for querying over Linked Open Data

-

Massive number of possible links on the open Web

New content is produced faster than you can follow links

-

Inefficient query plans

Traversal engines cannot optimize sufficiently due to a lack of statistics

Link Traversal becomes feasible with structural assumptions

-

Environments with structural properties

Subsets of the Web that follow specific structures

-

Traversal engines can make additional assumptions

Assumptions about how data is structured and interlinked

→ Guided link traversal

Solid pods follow structural properties

-

Pod contents are listed through a REST API

Linked Data Platform

-

WebID profile

User name and link to storage

-

Type index

Type-based resource discovery

Notable SPARQL engines

-

SPARQL endpoint as a service

-

Open-source engine in Java

-

Open-source engine in C

-

Open-source engine in TypeScript, which focuses on decentralized querying

Popular public SPARQL endpoints

Popular biological SPARQL endpoints

Federation over multiple SPARQL endpoints

Data across multiple data sources can be joined in a single query

-

Joins DBpedia, VIAF, Harvard Library

-

Joins Uniprot and Rhea

-

Joins Uniprot, Rhea, and ChEMBL

Example Link Traversal queries

Discover sources on the fly.

-

Queries over real-world and synthetic environments

Conclusions

-

Biological Knowledge Graphs use (Semantic) Web technologies

RDF, SPARQL, …

-

Knowledge Graphs can be interlinked

Thanks to RDF's global identifiers

-

Federated querying

Combining data across multiple Knowledge Graphs

Complex queries can be slow

Ongoing research

-

Developing the Comunica query engine

SPARQL 1.2, optimization, Comunica Association, …

-

Optimizing query processing

Federated querying, link traversal, personalization, …

-

Neurosymbolic AI

Connecting AI agents with Knowledge Graphs (Comunica MCP)

-

Personal genomics

(Personal) Knowledge Graphs + genomic data

Combining Personal Knowledge Graphs with Genomic Medicine

PhD topic of Elias Crum (collaboration with VITO - Bart Buelens, Gokhan Ertaylan)

-

Genomic Sequence Data Sharing for Clinical Practice

A review on why we do not see more genomic data usage

Published in Computers in Biology and Medicine

-

Towards storing Personal Genome Pods

Genomic data is stored in personal data pods

-

Privacy-preserving querying

Analyses within one or multiple genomes: privacy-preserving

Linking with other types of data (e.g. pharmacogenomics)